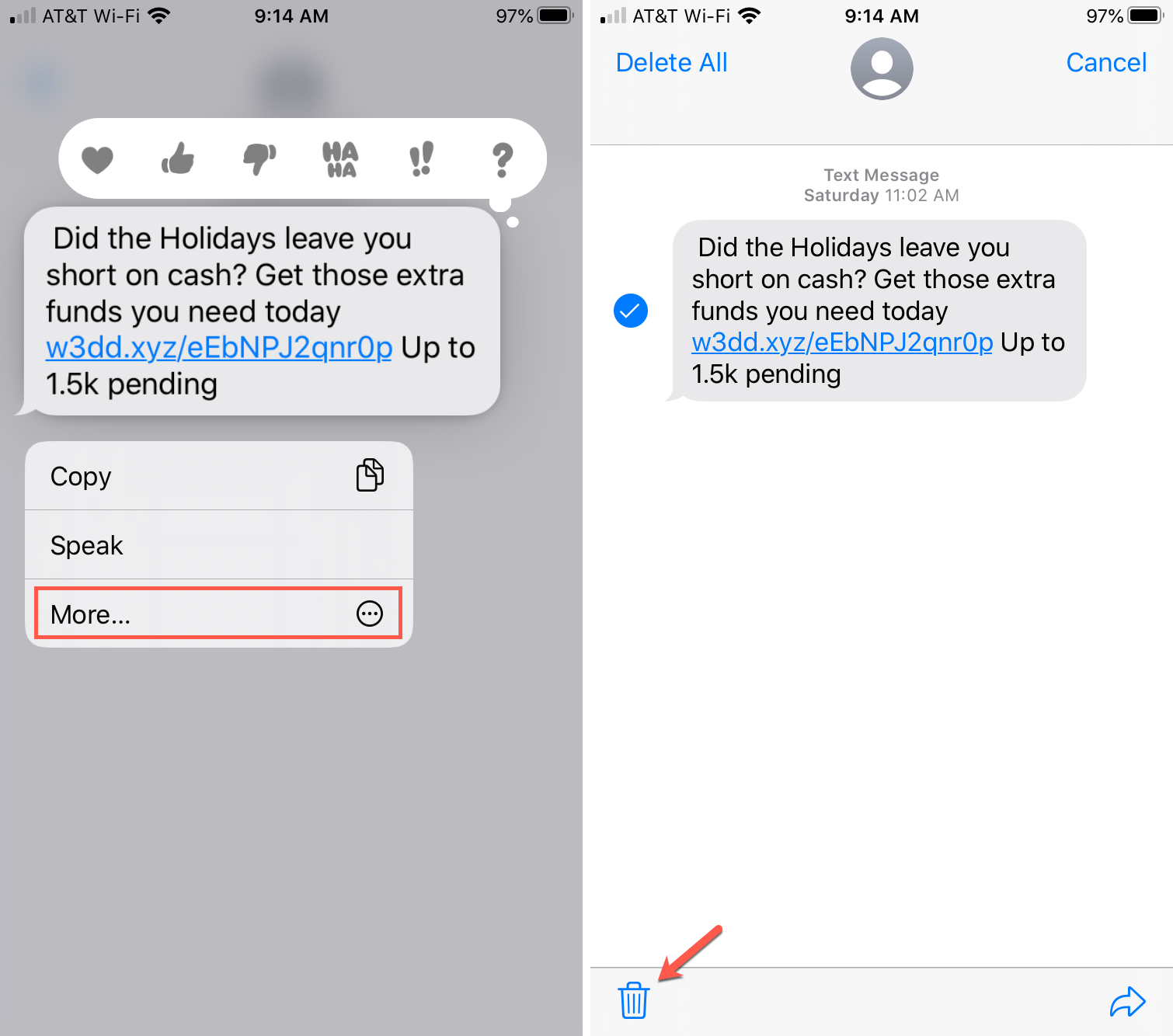

Application using Examples

We'll need the stopwords from the NLTK library in our implementation, so `nltk` is imported first.

As mentioned earlier, there are two methods we can use:

- `cleantext.clean("your_raw_text_here", all=True)` returns the cleaned text in string format.
- `cleantext.clean_words("your_raw_text_here", all=True)` returns a list of clean words.

The two main methods, as discussed, are shown here on sample input: first `cleantext.clean("the_text_input_by_you", all=True)`, then `cleantext.clean_words('Your s$ample !!!! tExt3% to cleaN566556+2+59*/133 wiLL GO he123re', all=True)`. Notice that every operation has been carried out before we are given the output.

Text can contain letters encoded as Unicode characters, with a different Unicode code for each letter. There are different encodings such as UTF-8, UTF-32 and so on. Notice that the 'u' has been encoded, so we have to convert it into the normal character described by ASCII; otherwise it will not be recognised as an English letter and will be discarded. This may be the case with many words that English has taken in from other languages.

CLOSEST ASCII REPRESENTATION

ASCII, abbreviated from American Standard Code for Information Interchange, is a character encoding standard, just like Unicode.
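To make the idea of a closest ASCII representation concrete, here is a minimal sketch using only Python's standard `unicodedata` module. It is not how CleanText does it internally; the function name `to_closest_ascii` and the sample string are assumptions made up for this illustration.

```python
import unicodedata


def to_closest_ascii(text: str) -> str:
    """Replace accented letters with their closest ASCII letters and drop the rest."""
    # NFKD splits a character such as "ü" into a plain "u" plus a combining mark.
    decomposed = unicodedata.normalize("NFKD", text)
    # Encoding to ASCII with errors="ignore" discards the combining marks
    # and any other character that has no ASCII equivalent.
    return decomposed.encode("ascii", "ignore").decode("ascii")


# Hypothetical sample text; \u00fc is "ü" and \u00e9 is "é".
sample = "Z\u00fcrich caf\u00e9"
print(to_closest_ascii(sample))

# The same letter takes a different number of bytes in different encodings.
print(len("\u00fc".encode("utf-8")), len("\u00fc".encode("utf-32-le")))
```

Without the normalisation step, a plain ASCII encode with `errors="ignore"` would drop the accented letter altogether, which is the "discarded" case mentioned above.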

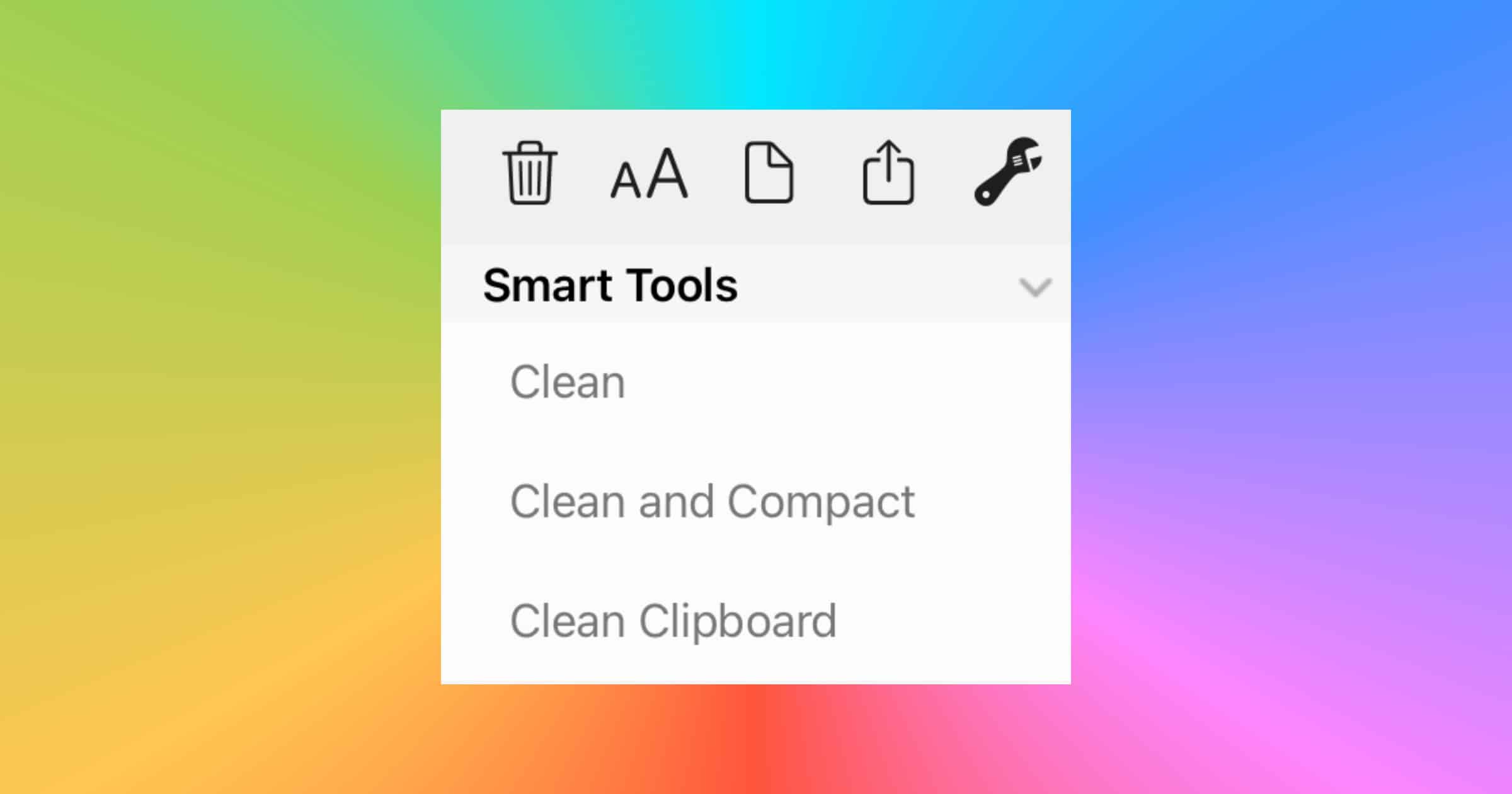

It is a simple, easy-to-use package that needs minimal code and has a ton of features to leverage (we all want that, right?). There are two methods (yeah, mainly there are only two in this case), namely:

- clean: performs cleaning on raw text and returns the cleaned text in the form of a string.
- clean_words: same as above, cleaning raw text, but returns a list of clean words (even better).

The beautiful thing about the CleanText package is not the number of operations it supports but how easily you can use them. Some of them are listed below, and we'll later write some code showcasing them for better understanding.

- Converting the entire text to a uniform lowercase structure.
- Removing stopwords, with a choice of language for the stopword list.
- Stemming, a process in which words with a similar meaning or a common stem are converted into a single word. For example, eat, eats, eating and eaten all belong to the stem word eat and hence are converted to that.

Enough introduction; let's see how to install and use CleanText.

Code Implementation of CleanText

Installation

The CleanText package requires Python 3 and NLTK for execution. It can be installed using pip.
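To give a feel for what lowercasing, stopword removal and stemming do to a piece of text, here is a rough standard-library sketch. It is not CleanText's implementation: the tiny `STOPWORDS` set, the crude `naive_stem` helper and the names `simple_clean` and `simple_clean_words` are all hypothetical stand-ins (the package itself relies on NLTK).

```python
import re
from typing import List

# Hypothetical, deliberately tiny stopword list; NLTK ships full per-language lists.
STOPWORDS = {"a", "an", "and", "the", "is", "are", "to", "of", "in"}


def naive_stem(word: str) -> str:
    """Strip a few common English suffixes; a crude stand-in for a real stemmer."""
    for suffix in ("ing", "en", "s"):
        # Only strip when at least three letters would remain.
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word


def simple_clean_words(text: str) -> List[str]:
    """Return a list of cleaned words, loosely mirroring the idea of clean_words."""
    # Lowercase first, then keep only runs of letters (drops digits and punctuation).
    words = re.findall(r"[a-z]+", text.lower())
    return [naive_stem(w) for w in words if w not in STOPWORDS]


def simple_clean(text: str) -> str:
    """Return the cleaned text as one string, loosely mirroring the idea of clean."""
    return " ".join(simple_clean_words(text))


print(simple_clean_words("Eat, eats, eating and eaten: the 4 forms!"))
print(simple_clean("Eat, eats, eating and eaten: the 4 forms!"))
```

A real stemmer handles far more cases than this suffix stripper, so treat the output only as an illustration of the three steps.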

Everyone has different opinions, but they can't help but agree on this fact! What else is messy? Data!! Lots and lots of data which we collect, scrape or extract from numerous sources. So messy that, according to one survey, data scientists spend around 60% of their time cleaning data. Unfortunately, approximately 50-55% find it quite enjoyable. Yeah, it's enjoyable.

But we know that data cleaning is time-consuming, right? Lots of tools have also popped up from time to time, all aiming to make this crucial and vital task more bearable (at least a little more bearable). The Python community hosts a ton of libraries to make data orderly and umm…legible? These range from handling never-ending data frames to stylizing them or analyzing datasets. NLTK and Regex are so well known across the community that we often overlook what else is out there for this hefty task.

This blog is about one such new library (released only last year, January 2020) called CleanText. CleanText is an open-source Python package (common for almost every package we see) made specifically for cleaning raw data (as the name suggests, and I believe you might have guessed).
